6 Points of Comparison for VP9, H.265, or H.264

Live streaming is complicated. Broadcasting a stream, transporting it over the internet, and maintaining good video quality along the way involve a series of steps, each of which can be handled with a variety of formats. One important component is the codec used to encode and decode the media. The choice of codec also determines which tools can be used to carry out the streaming.

To put it simply, for a video to be streamed over the internet, we must first capture the audio and video using a microphone and camera. That raw data must then be compressed (encoded) with a codec, broadcast over an internet connection (using a transport protocol), sent to some kind of server-side solution (typically a CDN or a cloud-based cluster like Red5 Pro), and finally decompressed (decoded) so the subscriber can watch the video.
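To see why the compression step is unavoidable, it helps to put a number on raw video. The sketch below computes the uncompressed bitrate of 8-bit 4:2:0 video (a common capture format, assumed here) and compares it with a hypothetical 6 Mbps encoded stream. The function name and the 6 Mbps figure are illustrative assumptions, not values from any particular encoder.

```python
def raw_bitrate_bps(width, height, fps, bits_per_sample=8, chroma="4:2:0"):
    """Bits per second of uncompressed video (no audio, container, or transport overhead)."""
    # Average samples per pixel: one luma sample plus the chroma planes;
    # in 4:2:0 each of the two chroma planes has a quarter of the pixel count.
    samples_per_pixel = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}[chroma]
    return width * height * samples_per_pixel * bits_per_sample * fps

raw = raw_bitrate_bps(1920, 1080, 30)
encoded = 6_000_000  # hypothetical 6 Mbps encoded 1080p30 stream
print(f"raw 1080p30: {raw / 1e6:.1f} Mbps")
print(f"compression ratio: about {raw / encoded:.0f}:1")
```

Even this modest 1080p30 stream needs roughly 750 Mbps uncompressed, which is why every live workflow depends on a codec.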

There are quite a few codecs in use today, including VP8/9, H.264 (AVC / Advanced Video Coding), H.265 (HEVC / High Efficiency Video Coding), and AV1. In previous posts, we covered AV1 and HEVC, so this post focuses mainly on VP8/VP9. We are lumping VP8 in with VP9 because they are similar with regard to licensing, and VP9 is the evolution of VP8.

Although we’ll mostly focus on VP9 versus H.265, the overarching concern is to explore the best codec to use for both hardware- and software-based streaming. Ultimately, we will present the case for why H.264 continues to be a more effective choice for low latency live streaming.

What is VP9?

The VP9 codec is a royalty-free, open-source video coding standard developed by Google. The journey of VP9 began with the acquisition of On2 Technologies by Google in 2010. On2 Technologies, known for its pioneering work in video compression technology, had developed the VP8 codec, which served as the cornerstone for the next leap in video coding. Recognizing the potential to drive the future of video streaming, Google embarked on an ambitious project to enhance and refine this technology, leading to the birth of VP9. By open-sourcing the codec, Google democratized access to state-of-the-art video compression, ensuring that it could be freely used and integrated by developers and content creators around the globe.

VP9 was positioned as a royalty-free, open-source alternative to patent-encumbered, royalty-bearing codecs like H.265. It was designed to meet the demands of modern video content and to significantly improve coding efficiency over its predecessor, VP8.

VP9 introduced several significant technical improvements over its predecessor, VP8, aimed at increasing compression efficiency, enhancing video quality, and optimizing performance for a wide range of devices and network conditions. Some of the key advancements include:

- Improved Compression Efficiency: VP9 offers better compression than VP8, allowing for reduced file sizes without sacrificing video quality and detail. This is particularly beneficial for 4K and higher resolutions, where bandwidth efficiency is crucial.

- Higher Resolution Support: VP9 supports ultra-high-definition video content, enabling more efficient streaming of 4K and higher resolution videos than VP8. This makes it well-suited for platforms that demand high-quality video experiences.

- Adaptive Block Size: Unlike VP8, which codes each frame in fixed 16×16 macroblocks, VP9 can adaptively choose from a range of block sizes (from 4×4 to 64×64 pixels) for more efficient coding. This flexibility allows VP9 to better handle a variety of content types and details within a video frame.

- Tile-based Parallel Processing: VP9 introduces tiling, which divides the video frame into smaller, independent regions that can be processed in parallel. This feature improves encoding and decoding performance, especially on multi-core processors, and facilitates error resilience in streamed content.

- Enhanced Prediction Modes: VP9 includes more advanced prediction modes for both intra-frame and inter-frame (motion) predictions. These improvements help in reducing redundancy and achieving higher compression ratios by better predicting pixel values within frames.

- Rectangular Motion Partitions: VP9 can split a block into rectangular (horizontal or vertical) as well as square partitions, each with its own motion vector, enabling more precise motion estimation and compensation. This leads to better handling of motion in videos, improving the quality of fast-moving scenes.

- Multi-threading Support: VP9 is designed to take better advantage of multi-threading capabilities in modern processors, enhancing the speed and efficiency of video encoding and decoding processes.

- Improved Loop Filters: VP9 includes enhanced loop filtering techniques, which help in reducing blockiness and other compression artifacts, resulting in smoother and clearer video playback.
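The tiling idea from the list above can be sketched in a few lines. This is a simplified illustration, not VP9’s actual tile syntax: it splits the frame width into column tiles aligned to 64×64 superblocks and hands each tile to a worker. Real VP9 restricts the number of tile columns to a power of two and enforces minimum and maximum tile widths, and `encode_tile` is a hypothetical stand-in that merely sums pixel values. Python threads are used only to show the structure; a real encoder does this work with native threads.

```python
from concurrent.futures import ThreadPoolExecutor

SUPERBLOCK = 64  # VP9 superblock width in pixels

def tile_columns(frame_width, num_tiles):
    """Split the frame width into num_tiles column ranges aligned to superblock edges."""
    sb_cols = -(-frame_width // SUPERBLOCK)  # number of superblock columns (ceiling division)
    bounds = []
    for i in range(num_tiles):
        start_sb = (i * sb_cols) // num_tiles
        end_sb = ((i + 1) * sb_cols) // num_tiles
        bounds.append((start_sb * SUPERBLOCK, min(end_sb * SUPERBLOCK, frame_width)))
    return bounds

def encode_tile(frame, x0, x1):
    """Hypothetical stand-in for tile encoding: touches only pixels in columns [x0, x1)."""
    return sum(sum(row[x0:x1]) for row in frame)

def encode_frame_in_tiles(frame, num_tiles=4):
    """Process every tile concurrently and return the per-tile results in tile order."""
    width = len(frame[0])
    with ThreadPoolExecutor(max_workers=num_tiles) as pool:
        futures = [pool.submit(encode_tile, frame, x0, x1)
                   for x0, x1 in tile_columns(width, num_tiles)]
        return [f.result() for f in futures]
```

Because each tile reads only its own columns, no worker has to wait on another, which is what lets tiles scale across CPU cores.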

This leap in efficiency was crucial for accommodating the burgeoning trend of ultra HD (UHD) content on platforms like YouTube. With 4K video rapidly becoming the standard for content consumption, the need for a codec that could provide high-quality video without the hefty bandwidth requirements of previous standards was more pressing than ever.

What is H.265?

The H.265 codec, or High Efficiency Video Coding (HEVC), was developed through a joint effort by the Video Coding Experts Group (VCEG) and the Moving Picture Experts Group (MPEG). In April 2013, the HEVC standard was approved as the official successor to H.264, also known as Advanced Video Coding (AVC). We cover more details on H.264/AVC below, but first, let’s discuss the advantages of H.265.

HEVC improves upon the compression efficiency of H.264 (AVC), reducing the bitrate needed for the same perceived quality by around 50%. It uses coding tree units (CTUs), which can be substantially larger than the macroblocks used in H.264. This allows for more efficient data organization and compression, particularly for high-resolution videos. Overall, HEVC introduces improved intra-frame prediction, more sophisticated entropy coding, and advanced motion vector prediction mechanisms. Together, these innovations let HEVC deliver substantially better compression than H.264 without sacrificing video quality.

The move from macroblocks to coding tree units was one of the more significant improvements over AVC, particularly for intra-frame prediction. In both codecs, the current frame is divided into smaller blocks or units. In H.264, these are called macroblocks (16×16 pixels), whereas H.265 uses CTUs whose size can vary (64×64, 32×32, or 16×16) and which can be recursively split into smaller coding units.

The efficiency of intra-frame prediction comes from its ability to significantly reduce the amount of data needed to represent a frame by exploiting spatial redundancy. The quality of the prediction and the compression efficiency heavily depend on the encoder’s ability to choose the most appropriate prediction mode for each block.
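To make “choosing the most appropriate prediction mode” concrete, here is a minimal sketch of intra mode decision for one block. It assumes only three modes (vertical, horizontal, and DC) and uses the plain sum of absolute differences (SAD) as the cost. Real encoders evaluate many more directional modes (HEVC defines 35 intra modes) and typically use a rate-distortion cost instead of raw SAD; the function names are hypothetical.

```python
def predict(mode, top, left, n):
    """Build an n x n prediction from the reconstructed row above and column to the left."""
    if mode == "vertical":    # extend the row above straight down
        return [list(top[:n]) for _ in range(n)]
    if mode == "horizontal":  # extend the left column straight across
        return [[left[r]] * n for r in range(n)]
    if mode == "dc":          # flat block at the mean of all neighbours
        dc = round((sum(top[:n]) + sum(left[:n])) / (2 * n))
        return [[dc] * n for _ in range(n)]
    raise ValueError(f"unknown mode {mode!r}")

def sad(a, b):
    """Sum of absolute differences between two equal-size blocks."""
    return sum(abs(p - q) for row_a, row_b in zip(a, b) for p, q in zip(row_a, row_b))

def choose_intra_mode(block, top, left):
    """Try each mode and keep the one whose prediction leaves the smallest residual."""
    n = len(block)
    costs = {m: sad(block, predict(m, top, left, n)) for m in ("vertical", "horizontal", "dc")}
    best = min(costs, key=costs.get)
    return best, costs[best]
```

A block of vertical stripes whose top neighbours match it exactly is predicted perfectly by the vertical mode (cost 0), so only the mode choice, not the pixels, needs to be sent.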

However, there is a trade-off between compression efficiency and computational complexity. More sophisticated prediction techniques used in H.265/HEVC can achieve higher compression but require more processing power, which can be a consideration for real-time applications or devices with limited computing resources.

VP9, unlike H.265/HEVC, does not use CTUs as defined in the HEVC standard. Instead, VP9 employs a somewhat similar block structure with its own characteristics and terminology: each frame is divided into 64×64 superblocks that can be recursively partitioned down to 4×4 blocks. VP9’s approach to block partitioning and intra-frame prediction is designed to enhance coding efficiency while maintaining flexibility and simplicity in its coding structure.

More importantly, VP9’s intra-frame prediction is optimized to strike a balance between compression efficiency and computational complexity, making it highly effective for a wide range of applications, from streaming video content at various resolutions to enabling efficient playback on devices with limited processing power. This means that it can be a better choice in many instances, including real-time streaming, particularly when using software (as opposed to dedicated hardware) to encode the live video stream.

Technology aside, one of the biggest differences between HEVC and VP9 is licensing, specifically their approach to royalties and accessibility. HEVC is managed by several patent pools, including MPEG-LA, Velos Media, and HEVC Advance, and requires licensees to pay fees for commercial use, which can make it costly for some users. In contrast, Google offers VP9 as an open-source, royalty-free codec to encourage widespread adoption and integration, giving developers and content providers a more accessible way to implement high-efficiency video coding without the burden of licensing fees. Having a free, high-performance codec for Google Chrome was likely one of the main reasons Google chose to open-source it.

What is H.264?

H.264, or AVC (Advanced Video Coding), is currently the most widely adopted video codec: it was used by 91% of video industry developers as of September 2019. Like H.265, H.264 was developed jointly by the ITU-T Video Coding Experts Group (VCEG) and the Moving Picture Experts Group (MPEG) as an improvement over previous standards, with the aim of delivering efficient compression and high-quality video content over the internet.

While both VP9 and H.265 allow videos to be streamed at higher resolutions with much lower bandwidth, the ubiquity of H.264 support often outweighs this advantage. More on this later.

H.264 is covered by many patents, which are licensed through the MPEG-LA patent pool. However, in 2013 Cisco Systems released OpenH264, a free, open-source H.264 encoder and decoder. Cisco also distributes prebuilt OpenH264 binaries and covers the MPEG-LA royalties for them. In other words, Cisco pays for the patent licenses so the rest of us can use those binaries. This, in turn, drove wide adoption of the H.264 codec, and OpenH264 found its way into web browsers such as Firefox.

For a very detailed overview of H.264, take a look at this post by VideoProc.

Now that we have introduced the codecs, let’s examine how they compare to each other. We’ve put together a list of 6 key factors to evaluate each codec.

Encoding Quality

There isn’t much difference between VP9 and H.265 in terms of encoding quality. Video quality tends to be about the same, and both provide similarly efficient compression. However, H.265 slightly outperforms VP9 at high bitrates, while VP9 outperforms H.265 at lower bitrates.

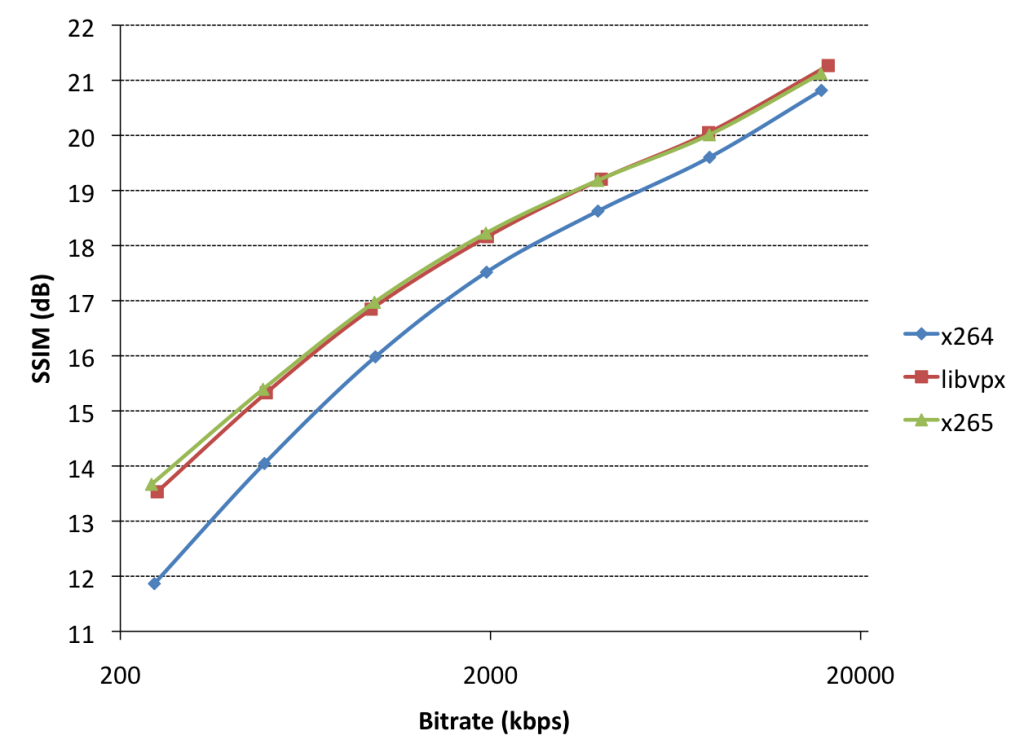

To judge image quality, we can use the SSIM (Structural Similarity Index Measure) metric, as shown in the chart. When a stream is broadcast over the internet, compressing and decompressing (encoding and decoding) its visual data can introduce slight distortions, because lossy compression discards some information that the decoder must approximate when reconstructing the image. SSIM measures how similar the decoded image is to the original, on a scale where 1 means identical.

VP9 and H.265 show similar SSIM results, while H.264 scores slightly lower.
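For readers curious about what SSIM actually computes, the sketch below implements the SSIM formula over a single window covering the whole image. It compares mean brightness, contrast, and correlation between an original and a decoded image, with 1.0 meaning identical. The standard metric instead slides a small (typically Gaussian-weighted) window across the image and averages the local scores, so treat this global version, and the `ssim_global` name, as an illustration rather than a replacement for a reference implementation.

```python
def ssim_global(x, y, max_val=255.0):
    """SSIM of two equal-length, flattened grayscale images using one global window."""
    n = len(x)
    # Stabilizing constants from the SSIM definition (K1 = 0.01, K2 = 0.03).
    c1 = (0.01 * max_val) ** 2
    c2 = (0.03 * max_val) ** 2
    mx, my = sum(x) / n, sum(y) / n
    var_x = sum((a - mx) ** 2 for a in x) / n
    var_y = sum((b - my) ** 2 for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    luminance = (2 * mx * my + c1) / (mx * mx + my * my + c1)  # mean-brightness agreement
    contrast_structure = (2 * cov + c2) / (var_x + var_y + c2)  # contrast and correlation
    return luminance * contrast_structure

original = [10, 20, 30, 40, 50, 60, 70, 80]
decoded = [12, 17, 33, 38, 52, 57, 73, 78]  # hypothetical slightly distorted copy
print(f"SSIM: {ssim_global(original, decoded):.4f}")
```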

As we discussed in some detail already, part of how VP9 and H.265 increase compression is by using larger blocks. A macroblock is a processing unit of an image or video frame that contains a group of its pixels. H.264 uses 16×16 macroblocks, while VP9 and H.265 use blocks of up to 64×64. Within each block, the encoder predicts pixel values from neighboring, already-decoded pixels along one of several intra-prediction directions and encodes only the difference, which lets it rebuild the original image with slightly less detail in non-crucial areas. This enables VP9 and H.265 to increase efficiency, because less detailed areas of the image, such as the sky or a blurred background, are not broken up into smaller units. The detail lost in these areas does not substantially decrease the overall quality of the image, as the important sections are rendered in more detail. It should also be noted that as you increase the bitrate, the difference in quality between AVC (H.264) and the two other codecs gets smaller.
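The idea that flat regions stay in large blocks while detailed regions get split can be illustrated with a quadtree. This sketch uses pixel variance as a stand-in for “detail,” with a hypothetical threshold of 100 and minimum block size of 8; real VP9 and HEVC encoders choose partitions through rate-distortion optimization, not a simple variance test.

```python
def block_variance(frame, x, y, size):
    """Pixel variance of the size x size block whose top-left corner is (x, y)."""
    pixels = [frame[r][c] for r in range(y, y + size) for c in range(x, x + size)]
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) ** 2 for p in pixels) / len(pixels)

def partition(frame, x=0, y=0, size=64, min_size=8, threshold=100.0):
    """Quadtree-split a block until it is flat enough or reaches min_size.

    Returns the leaf blocks as (x, y, size) tuples."""
    if size <= min_size or block_variance(frame, x, y, size) <= threshold:
        return [(x, y, size)]  # flat (or smallest allowed) block: keep it whole
    half = size // 2
    leaves = []
    for dy in (0, half):
        for dx in (0, half):
            leaves += partition(frame, x + dx, y + dy, half, min_size, threshold)
    return leaves

# A 64x64 frame that is flat except for a busy checkerboard in the bottom-right quadrant.
frame = [[(255 if (r + c) % 2 else 0) if r >= 32 and c >= 32 else 100
          for c in range(64)] for r in range(64)]
print(partition(frame))
```

The three flat quadrants each stay as a single 32×32 block, while the detailed quadrant is broken into sixteen 8×8 blocks, mirroring the sky-versus-foreground example above.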

H.264 produces a poorer image, particularly at lower bitrates. When images are encoded at the same bitrate, both VP9 and H.265 are more detailed and crisp than those produced with H.264. In other words, to produce the same image quality as VP9 or H.265, H.264 needs a higher bitrate. However, the difference in quality, while perceivable, is not necessarily an outright problem. The SSIM numbers give a more objective measure, and they show that the results for H.264 are fairly close to VP9 and H.265. Thus, while H.264 may trail in image quality, the gap isn’t large enough to outweigh the big tradeoffs detailed below.

We should also point out that other factors, such as improved sub-pixel interpolation and motion vector reference selection (motion estimation), improve image quality as well, because they help predict what the next frame of a video will look like. Those are fairly complicated concepts that deserve their own articles, so we will leave it at that.
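For a concrete picture of motion estimation, the simplest form is integer-pixel full-search block matching: for one block of the current frame, try every offset within a small window of the previous frame and keep the offset with the lowest SAD. This is a textbook baseline, not how any particular codec’s encoder searches; production encoders use fast search patterns plus the sub-pixel refinement mentioned above. The function names, the 8×8 block size, and the ±4 pixel search range are assumptions.

```python
def block_sad(cur, ref, bx, by, dx, dy, n):
    """SAD between the n x n block at (bx, by) in cur and the block offset by (dx, dy) in ref."""
    return sum(abs(cur[by + r][bx + c] - ref[by + dy + r][bx + dx + c])
               for r in range(n) for c in range(n))

def full_search(cur, ref, bx, by, n=8, search=4):
    """Integer-pixel full-search motion estimation for one block.

    Returns (dx, dy, sad): the offset into ref that best matches the current block."""
    h, w = len(ref), len(ref[0])
    best = (0, 0, block_sad(cur, ref, bx, by, 0, 0, n))
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            # Skip candidate blocks that would fall outside the reference frame.
            if not (0 <= bx + dx and bx + dx + n <= w and 0 <= by + dy and by + dy + n <= h):
                continue
            cost = block_sad(cur, ref, bx, by, dx, dy, n)
            if cost < best[2]:
                best = (dx, dy, cost)
    return best

# Hypothetical textured reference frame, and a current frame shifted by (2, 1).
ref = [[(7 * r + 13 * c) % 256 for c in range(32)] for r in range(32)]
cur = [[ref[min(r + 1, 31)][min(c + 2, 31)] for c in range(32)] for r in range(32)]
print(full_search(cur, ref, 8, 8))
```

Once the best offset is found, the encoder only needs to send that motion vector and the (here zero) residual instead of the block’s pixels.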

Winner: VP9 and H.265 tie (same quality and similar efficiency)

Encoding Time

In order to achieve a higher compression rate, VP9 and H.265 need to perform more processing. All that extra processing means that they will take longer to encode the video. This will negatively impact latency as all that additional time spent processing will delay the video from being broadcast. Keeping latency low is important for ensuring that live video streams can provide an interactive experience, among other reasons.

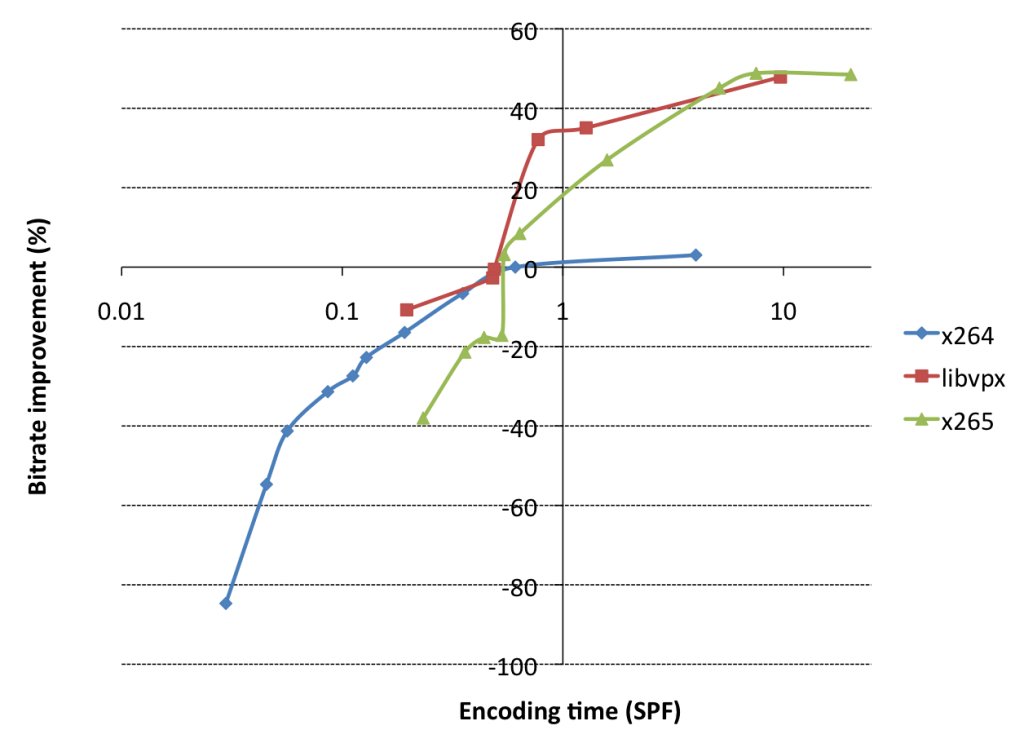

So what exactly does the above graph mean?

This graph shows the encoding time in seconds per frame on the horizontal axis. The vertical axis shows bitrate improvement, which compares a combination of SSIM and bitrate to a reference point set to x264 @ veryslow (as defined in the blog text). The reference point is why x264 doesn’t go far above 0%.
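The “bitrate improvement” axis compares how much bitrate each encoder needs to reach the same quality. A simplified version of that calculation, at a single target SSIM, interpolates each encoder’s rate-quality curve and compares the resulting bitrates. The curve points below are made up for illustration, and published comparisons usually use the Bjøntegaard delta rate (BD-rate), which averages the gap over a range of qualities using curve fitting.

```python
def bitrate_at_quality(curve, target):
    """Linearly interpolate the bitrate needed to reach a target SSIM.

    curve: (bitrate_kbps, ssim) points sorted by bitrate, with SSIM increasing."""
    for (r0, q0), (r1, q1) in zip(curve, curve[1:]):
        if q0 <= target <= q1:
            return r0 + (target - q0) * (r1 - r0) / (q1 - q0)
    raise ValueError("target quality is outside the measured range")

def bitrate_saving(reference, candidate, target):
    """Percent bitrate the candidate saves versus the reference at equal quality."""
    ref_kbps = bitrate_at_quality(reference, target)
    new_kbps = bitrate_at_quality(candidate, target)
    return 100.0 * (ref_kbps - new_kbps) / ref_kbps

# Hypothetical rate-quality points, for illustration only.
reference = [(1000, 0.90), (2000, 0.94), (4000, 0.97)]
candidate = [(500, 0.90), (1000, 0.94), (2000, 0.97)]
print(f"saving at SSIM 0.95: {bitrate_saving(reference, candidate, 0.95):.1f}%")
```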

What does the graph tell us?

VP9 and H.265 deliver (as advertised) roughly a 50% bitrate improvement over H.264, but they are also 10 to 20 times slower. If you follow the blue line for x264 (AVC), you will see that it stays below the other two lines for the majority of the bitrate benchmark points. Not only that, but both the green (H.265) and orange (VP9) lines intersect H.264 pretty early in their curves, which means their encoding time per frame starts to increase drastically and really drags down stream performance. Thus, while VP9 and H.265 show much better compression rates, they come at a very high cost in encoding time, which will greatly increase latency. A more in-depth analysis of encoding times and codec comparisons can be found in this University of Waterloo study.
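A quick way to see why a 10 to 20 times slowdown matters for live video: at 30 fps, a sequential encoder has about 33 ms to finish each frame. The sketch assumes a hypothetical baseline of 20 ms per frame and scales it by the slowdowns mentioned above. It ignores frame-level parallelism and hardware acceleration, which real deployments use to recover some of that time.

```python
def max_fps(seconds_per_frame):
    """Frames per second a sequential encoder can sustain at a given per-frame cost."""
    return 1.0 / seconds_per_frame

def keeps_up(seconds_per_frame, target_fps=30):
    """True if encoding one frame fits inside the frame interval (1 / target_fps seconds)."""
    return seconds_per_frame <= 1.0 / target_fps

baseline = 0.02  # hypothetical H.264 encoding cost: 20 ms per frame
for slowdown in (1, 10, 20):
    spf = baseline * slowdown
    print(f"{slowdown:>2}x: {spf * 1000:.0f} ms/frame, "
          f"max {max_fps(spf):.1f} fps, real-time at 30 fps: {keeps_up(spf)}")
```

Any frame that takes longer than the frame interval either queues up (adding latency) or must be dropped.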

Winner: H.264

CPU Consumption

As covered in the last section, both VP9 and H.265 apply more computationally complex compression tools than H.264, which increases their CPU usage when encoding in software. Even when fully optimized, live streaming is a CPU-intensive process, so increasing the already high usage is a problem. However, hardware support can alleviate this: dedicated encoder chipsets take over the work and reduce CPU consumption.

H.265 currently enjoys more hardware support, including Windows 10 (via a downloadable extension or on Intel Kaby Lake or newer processors), Apple (iOS 11), and Android (Android 5.0) devices. While most mobile devices support VP9, most other systems lack hardware support for it. Without direct hardware support, VP9 encoding will peg the CPU, consuming a large amount of resources, decreasing battery life, and potentially increasing latency.

As we will cover in the next section, H.264 enjoys widespread support and doesn’t tax the CPU as heavily as VP9 or H.265 in the first place.

Winner: H.264 with H.265 close behind

Adoption and Browser Implementation

To work with a codec, devices need supported hardware or software encoders. H.265 has suffered from a low adoption rate due in no small part to patent licensing. Its patents are licensed through three patent pools (HEVC Advance, MPEG LA, and Velos Media) plus Technicolor, which licensed its HEVC patents separately. This makes it more expensive, which has discouraged adoption and largely limited it to specific hardware encoders and mobile chipsets.

As of version 107, Google Chrome supports HEVC decoding by default. Google integrated H.265 (HEVC) support into Chrome by leveraging the hardware decoding capabilities available on many modern devices. This means Chrome itself doesn’t decode HEVC content in software; instead, it relies on the device’s hardware (such as GPUs that support HEVC) to handle decoding. This method avoids the need for Google to deal with HEVC’s licensing fees for software decoding directly, as those fees would typically be covered by the hardware manufacturers who include HEVC support in their devices. HEVC support in Chrome is available on ChromeOS, Mac, Windows, Android, and Linux, wherever the platform already offers hardware HEVC decoding.

All that said, support for H.265 encoding in WebRTC applications within Google Chrome is less clear. WebRTC standards mandate support for specific codecs, VP8 and H.264, for video communication to ensure compatibility across different platforms and devices. Currently, other codecs such as HEVC are not widely supported for WebRTC applications running in browsers. And even where HEVC might work, it introduces compatibility issues with other WebRTC endpoints that can’t decode HEVC video. Without H.265 support in WebRTC, achieving real-time latency in browsers with this codec is difficult.
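Codec support in WebRTC is settled during the SDP offer/answer exchange: the offer’s `m=video` line lists RTP payload types in order of preference, and `a=rtpmap` lines map each payload type to a codec name. The sketch below, which assumes a single video section and uses hypothetical helper names, picks the first offered codec that the receiving side also supports. It shows why an endpoint that only handles HEVC cannot interoperate with a peer that offers only VP8, VP9, and H.264. Real negotiation also compares `a=fmtp` parameters (for example, H.264’s profile-level-id), which this sketch ignores.

```python
def offered_codecs(sdp):
    """Codec names from the (single) m=video section, in the offer's preference order."""
    payload_order, names, in_video = [], {}, False
    for line in sdp.splitlines():
        line = line.strip()
        if line.startswith("m="):
            in_video = line.startswith("m=video")
            if in_video:
                # m=video <port> <proto> <pt1> <pt2> ...
                payload_order = line.split()[3:]
        elif in_video and line.startswith("a=rtpmap:"):
            # a=rtpmap:<pt> <encoding name>/<clock rate>
            pt, encoding = line[len("a=rtpmap:"):].split(" ", 1)
            names[pt] = encoding.split("/")[0].upper()
    return [names[pt] for pt in payload_order if pt in names]

def pick_codec(sdp_offer, locally_supported):
    """First codec in the offer's preference order that this side can handle, else None."""
    for codec in offered_codecs(sdp_offer):
        if codec in locally_supported:
            return codec
    return None

offer = """v=0
m=audio 9 UDP/TLS/RTP/SAVPF 111
a=rtpmap:111 opus/48000/2
m=video 9 UDP/TLS/RTP/SAVPF 96 98 102
a=rtpmap:96 VP8/90000
a=rtpmap:98 VP9/90000
a=rtpmap:102 H264/90000"""
print(pick_codec(offer, {"H264", "H265"}))
```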

By contrast, VP9 is royalty-free and open-source, which cleared the way for wider adoption. It is available in the major browsers Chrome, Firefox, and Edge, as well as in the operating systems Windows 10, Android 5.0, iOS 14, and macOS Big Sur and newer. Apple was a holdout until recently, only extending VP9 support to Safari on iOS, iPadOS, and Mac with iOS 17, and only on newer devices that include hardware encoding/decoding of the codec. All the other major browsers fully support VP9.

Although H.264 has a patent pool (MPEG LA) associated with it, Cisco, as we mentioned earlier, open-sourced its H.264 implementation in 2013 and released it as a free binary download. That was a gigantic boost to the widespread implementation of H.264. As a result, H.264 is supported by all major browsers on both desktop and mobile.

Winner: H.264 with VP9 closing the gap

Bandwidth Savings

The biggest advantage of higher compression rates and the resulting smaller files is that video consumes less bandwidth when you broadcast it. This means that users with slower internet connections are less constrained by them and can still enjoy high-quality video streams.

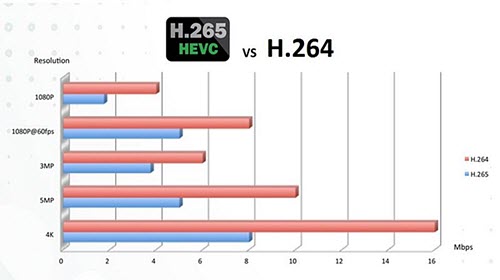

So which codec produces better compression efficiency to create smaller videos?

According to a test conducted by Netflix, H.265 delivers roughly 20% better compression than VP9. Although other tests have produced different numbers, they consistently find that H.265 creates smaller files. Depending on the objective metric used, H.265 provides 0.6% to 38.2% bitrate savings over VP9.

However, while consuming less bandwidth is useful, other factors should be taken into consideration. Upload speeds across the globe average 42.63 Mbps for fixed broadband connections, which suggests that many locations can support 4K streaming even with the higher bitrates required by H.264. Mobile connections average a much lower 10.93 Mbps, but that is still enough for 1080p streams.

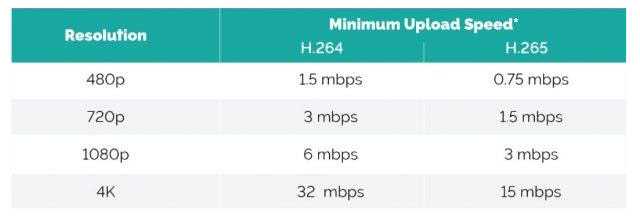

This diagram from Boxcast shows that average worldwide connection speeds can handle the upload speed requirements at every resolution tier. Note: we couldn’t find a graph comparing all three codecs, but VP9 would fall between H.264 and H.265.

Furthermore, there are ways to build streaming applications that cater to users in countries with slower internet speeds: adding ABR and transcoding support. With ABR (adaptive bitrate) streaming, the player switches between versions of the stream at different bitrates to deliver the best experience the connection allows. Transcoding creates those multiple quality levels from a single broadcast, so each client can request the best one for its available bandwidth.
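Client-side rendition selection in ABR can be sketched as picking the highest-bitrate rendition that fits within a safety margin of measured throughput. The ladder values, the 0.8 safety factor, and the `pick_rendition` name below are hypothetical; real players (for HLS or DASH) also weigh buffer level and recent switching history.

```python
# Hypothetical rendition ladder produced by transcoding: (label, bitrate in kbps).
LADDER = [("1080p", 6000), ("720p", 3000), ("480p", 1500), ("360p", 800)]

def pick_rendition(measured_kbps, ladder=LADDER, safety=0.8):
    """Return the highest-bitrate rendition that fits within a margin of measured throughput."""
    budget = measured_kbps * safety  # leave headroom for throughput fluctuations
    for label, kbps in sorted(ladder, key=lambda rung: rung[1], reverse=True):
        if kbps <= budget:
            return label
    # Nothing fits: fall back to the lowest rung rather than stalling.
    return min(ladder, key=lambda rung: rung[1])[0]

print(pick_rendition(10_930))  # the 10.93 Mbps mobile average cited above
```

With this hypothetical ladder, the mobile average of 10.93 Mbps selects the 1080p rung, while a congested 4 Mbps link drops to 720p.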

You may be thinking, “What about mobile devices stuck on 2G or 3G connections?” The fortunate reality is that palm-sized devices don’t need to stream the highest resolutions to look good. 720p or even 480p will still display on a small screen with good quality.

While bandwidth consumption may not matter as much to a consumer, it must be acknowledged that companies will save money on bandwidth costs if they stream with VP9 or H.265. Smaller files result in lower costs for data streaming over CDN or cloud networks. While that is certainly nice, it is only at high-resolution settings such as 4K that halving the data consumption makes a substantial difference.
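The scale argument is easy to check with arithmetic. The sketch converts a stream bitrate into gigabytes delivered per viewer-hour and compares a hypothetical H.264 bitrate with half of it (the roughly 50% saving discussed earlier) at 1080p and 4K. The bitrates are illustrative assumptions, not measured values.

```python
def gigabytes_per_hour(bitrate_mbps):
    """Data delivered to one viewer in one hour at a constant bitrate (decimal GB)."""
    return bitrate_mbps * 3600 / 8 / 1000  # Mbit/s -> Mbit/h -> MB/h -> GB/h

# Hypothetical H.264 bitrates; the H.265/VP9 case assumes a ~50% saving.
for label, h264_mbps in [("1080p", 6.0), ("4K", 20.0)]:
    h264_gb = gigabytes_per_hour(h264_mbps)
    efficient_gb = gigabytes_per_hour(h264_mbps / 2)
    print(f"{label}: {h264_gb:.2f} GB vs {efficient_gb:.2f} GB per viewer-hour "
          f"(saves {h264_gb - efficient_gb:.2f} GB)")
```

Multiplied across thousands of viewer-hours, the larger absolute saving at 4K is what shows up on a CDN bill.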

Of course, saving money is an important factor, no matter what the scale. That brings us to our next point, which presents the best of both worlds: better compression with the same performance.

Winner: H.265

Will LCEVC Change the Codec Game?

One alternative approach to improving picture quality and detail in live video streams while simultaneously reducing the bandwidth needed is LCEVC (Low Complexity Enhancement Video Coding). This MPEG standard increases compression rates by about 40% across the codecs it is paired with, because it is an additional processing layer that works on top of existing and future codecs, such as VP9, H.265, and AV1. As we covered in a previous article, LCEVC has the potential to have a large impact on video streaming technology. Without replacing any of today’s codecs, LCEVC can make them more efficient.
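The enhancement-layer idea behind LCEVC can be illustrated conceptually: a base codec carries a lower-resolution version of the picture, the decoder upsamples it, and a separate layer adds back the residual detail. The sketch below is a 1-D, lossless toy with hypothetical function names. The actual LCEVC standard defines its own upsampling filters, residual transforms, and entropy coding, and the base layer would be lossy in practice.

```python
def downsample(row):
    """Halve the resolution by averaging neighbouring pairs (row length must be even)."""
    return [(row[i] + row[i + 1]) / 2 for i in range(0, len(row) - 1, 2)]

def upsample(row):
    """Double the resolution by repeating each sample (nearest-neighbour)."""
    return [v for v in row for _ in (0, 1)]

def encode_two_layers(row):
    """Split a row into a low-resolution base layer and a full-resolution residual layer."""
    base = downsample(row)  # what the base codec (e.g. H.264) would carry
    residual = [o - p for o, p in zip(row, upsample(base))]  # enhancement data
    return base, residual

def decode_two_layers(base, residual):
    """Rebuild the full-resolution row: upsampled base plus residual detail."""
    return [p + r for p, r in zip(upsample(base), residual)]

row = [10, 12, 20, 22, 30, 31]
base, residual = encode_two_layers(row)
print(base, residual)
```

The residual values are small and cluster around zero, which is what makes the enhancement layer cheap to compress.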

From where things are now, it looks like content providers will be able to use LCEVC-enabled software or hardware-based encoders in combination with the Red5 Pro cross-cloud platform to unlock real-time streaming despite the processing-intensive video formats they are built with. Depending on which core codec is used, this applies not only to 4K and 8K UHD, but also to formats devised for 360-degree viewing, virtual reality, and other innovations.

One factor that could drive near-universal adoption of LCEVC is that virtually any device can support a thin LCEVC client, whether downloaded independently to viewers’ devices or embedded in the service provider’s app player. Through its HTML5 JavaScript implementation, LCEVC can also be decoded in browsers without plug-ins. This means that widespread implementation should, in theory, be fairly straightforward. For now, though, LCEVC is still at an early stage, and other, more basic approaches are proving more reliable and widely supported.

Why the Apple Vision Pro Might Help H.265

With the recent announcement and release of the Apple Vision Pro, Apple also put a spotlight on a stereo video extension of the H.265 spec: Multiview High Efficiency Video Coding (MV-HEVC), the format Apple uses for its “spatial video.” This allows users to watch 3D video in an extended reality (XR) environment. Not only that, but Apple enabled spatial video capture on the iPhone 15 Pro, ensuring a new wave of 3D content will enter the pipeline.

Apple’s adoption of MV-HEVC is a significant endorsement of the HEVC standard. By integrating MV-HEVC into the Vision Pro, Apple is not only enhancing the immersive experience for users but also setting a new benchmark for content creators and technology providers. This move is likely to spur the development and distribution of 3D content, as producers will be eager to cater to the users of Apple’s cutting-edge XR device.

Much as with HLS, Apple’s influence in the tech market means that its adoption of MV-HEVC is leading to broader industry acceptance of this technology. Meta has already announced support for the MV-HEVC format on the Quest 3. Other manufacturers, aiming to remain competitive, are also likely to add MV-HEVC compatibility to their devices, further cementing HEVC’s position as a leading video coding standard. This could increase demand for even more HEVC-compatible hardware and software solutions, boosting innovation and development within this space.

The potential success of a new device from Apple is one thing, but the iPhone’s ability to record MV-HEVC video is a game-changer, effectively giving millions of existing users a powerful camera and encoder for creating 3D video. At first glance, the ability to record 3D video may seem like a minor feature of the platform, but this one detail has the power to give a massive boost to MV-HEVC adoption. It may not be long until we see MV-HEVC content on YouTube, Twitch, and other large video platforms.

H.264, H.265, VP9, or Something Else?

After considering everything outlined above, AVC/H.264 is currently the best available option due to its widespread adoption and fast encoding speeds. Although H.265 and VP9 offer better compression and picture quality, the tradeoffs are currently just too severe. Specifically, long encoding times and voracious CPU consumption are significant roadblocks for live streaming video. Inefficient encoding is particularly harmful when targeting sub-500 ms latency.

That said, considering that VP9 is free and also enjoys widespread support, it will be a viable choice in the near future once faster software or hardware encoders are created. AV1 is poised to eventually replace VP9, but considering the astronomically high software encoding times it currently suffers from, there’s a lot of streamlining that needs to be done before it’s ready for expansive use. Of course, LCEVC could possibly circumvent the whole issue of changing codecs for better compression. Perhaps it will just serve as a longstanding bridge between H.264 and AV1.

Apple’s adoption of MV-HEVC with the Vision Pro could also be a major boost for the H.265 video coding standard. It not only enhances the user experience by providing immersive 3D content but also signals a shift in the industry towards broader support for HEVC. As a result, HEVC’s relevance and longevity in the market are likely to increase, driving innovation and adoption across the tech and content creation landscapes.

However, this new use of H.265 doesn’t get rid of its patent restrictions. The need to negotiate with patent pools such as MPEG-LA, Velos Media, and HEVC Advance has limited the adoption of H.265, and quite likely of its successor, H.266/VVC, particularly among browser vendors such as Google. VP9’s open license avoids this problem but has its own issues with widespread adoption.

Nonetheless, AV1 is poised to replace H.264, H.265, and VP8/9. AV1 improves on the picture quality and compression of the other codecs. With video consumption on the rise, lower bandwidth requirements will make it much easier to deliver the high-quality video that users are looking for. This is especially true in developing areas without wired connections, which depend more on cellular networks. The consortium behind AV1, the Alliance for Open Media, includes all of the major players, and the codec is royalty-free. All that is holding AV1 back right now is the lack of real-time encoders, but this is already beginning to change.

The support for AV1 hardware encoding and decoding across major hardware vendors like AMD, Intel, Apple, and NVIDIA is a strong indicator of the codec’s growing importance and adoption in the market. AMD’s RDNA 2 GPUs, excluding the Navi 24-based 6500 XT, along with NVIDIA’s GeForce 30- and 40-Series GPUs, have incorporated AV1 decoding capabilities. Intel went a step further by including AV1 hardware encoding support in its Arc Alchemist A-Series graphics cards, positioning itself as a frontrunner in AV1 encoding. NVIDIA followed with its RTX 40-series Ada Lovelace GPUs, bringing AV1 encoding muscle into the fray, and AMD’s RDNA 3 GPUs (Radeon RX 7000 series) have added AV1 encoding as well, giving AV1 broad support across the major graphics hardware manufacturers. Apple has added AV1 decode support in the A17 Pro chip for the iPhone 15 Pro, and we can only assume that future models will continue to expand support for the codec.

It’s not just hardware vendors who are adding AV1 support. YouTube also uses the codec, notably offering improved video quality for live streams and enabling up to 4K 60FPS streaming at lower bitrates. YouTube began adding videos transcoded with the AV1 codec to a special playlist on September 14, 2018, and YouTube account settings allow one to prefer the codec for videos.

The adoption of AV1 by these industry giants signifies a push towards more efficient video streaming technologies, considering AV1’s benefits in terms of compression efficiency over predecessors like H.264 and HEVC. This is particularly relevant as content platforms and services seek ways to deliver high-quality video content more efficiently, reducing bandwidth consumption without compromising on visual quality. The widespread support for AV1 in hardware ensures that consumers will benefit from improved image quality and lower data usage across a wide range of devices and platforms. With these developments, AV1 is well on its way to becoming the standard codec for video streaming, thanks to its superior compression technology and the backing of the most influential players in the tech industry.

Once real-time AV1 encoders become widely available, AV1 will be the way forward, and we are getting quite close to that point.

Think we missed something in our analysis? Let us know by sending an email to info@red5.net or schedule a call with us to find out more about Red5.