Watch Party: Episode II – Attack of the…Clowns? (Dynamic Overlays)

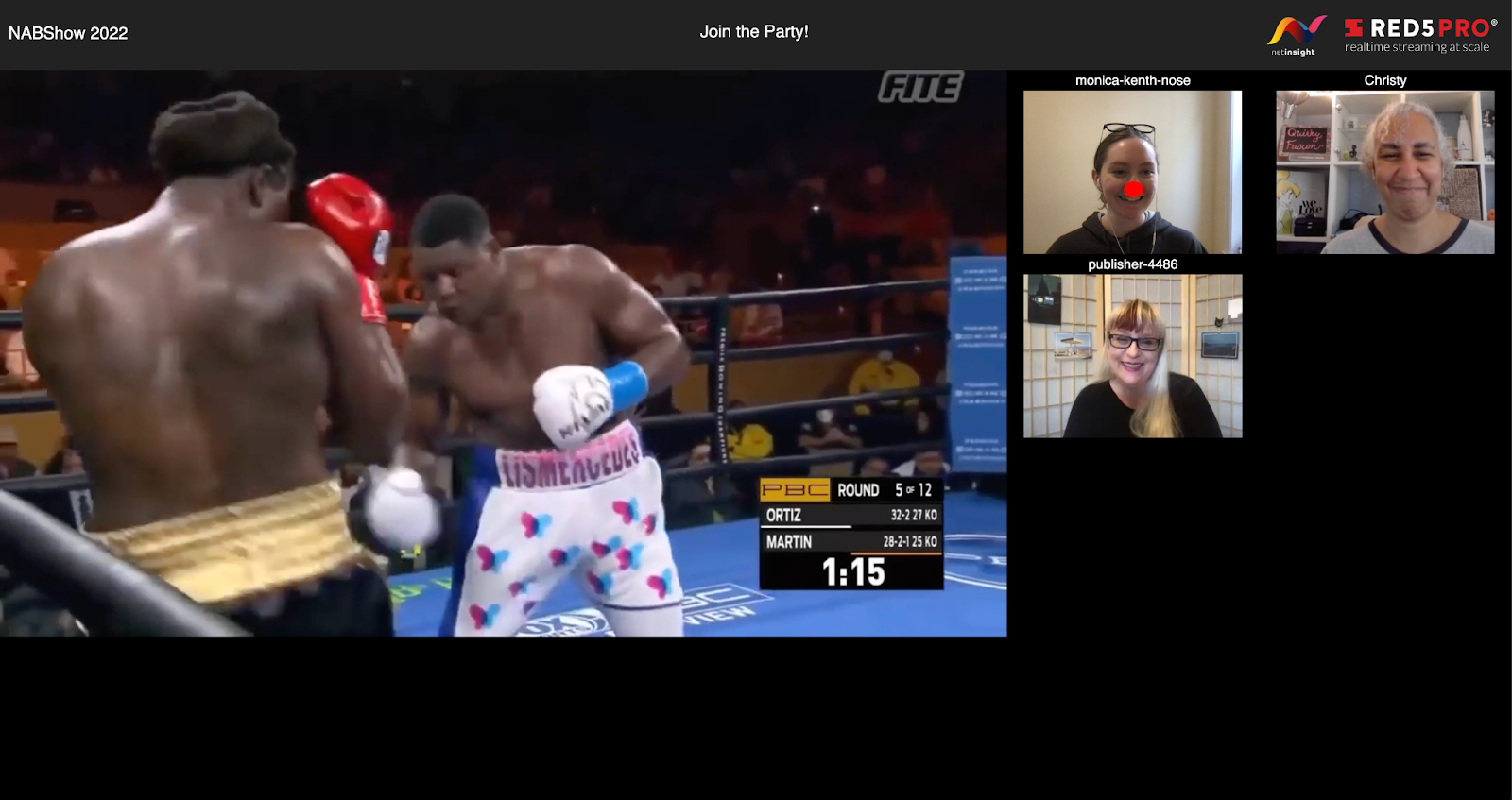

If you’ve been keeping up with our recent blog posts, you’ll know that we have been working on an exciting new Watch Party project over the past few months. The Watch Party allows several users to join a group call where they can enjoy their favorite show, movie, or game together in real time. Since our last post about the project, we have been working with the Net Insight team to create an even more interactive watch party experience… with a fun new Easter egg included. The Easter egg is a red nose dynamic overlay, which adds a clown nose to a user based on their stream name. Since this new feature gives us a laugh, we’ve decided to share how we did it. And it even serves a practical purpose (we promise)!

But first, how did this start? In a planning meeting for NAB, a representative from the Net Insight team created a concept drawing for a demo watch party, which showed a main video stream in the center, attendees to the right, and a bright red nose on one of the attendees. We like to keep our clients happy and be as detail-oriented as possible, so we made sure to add all of these features to the updated software.

The basics of the Watch Party setup were discussed in a previous post: How to Build a Virtual Watch Party. To add the red nose, we used a browser-based face recognition API to locate an attendee’s facial features. In particular, we used Face Landmark Detection, which designates 68 different “landmark” points on a person’s face. The API groups these points by facial region, so we used the nose group to grab the points for our filter. Specifically, we took the position of the landmark just below the tip of the nose as a reference point and drew a circle there on a transparent canvas overlaid on top of the video. This dynamic overlay process keeps the red nose attached to the attendee’s face at the designated landmark.
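A minimal sketch of that placement step might look like the following. It is not the Watch Party code itself: it assumes the landmark library returns the nose as nine points in the common 68-point layout (four down the bridge, then five along the base of the nose), and the names `noseCircle` and `drawNose`, the scale parameters, and the 0.75 radius factor are all illustrative choices.

```typescript
// A 2D point in the video's own pixel coordinates.
interface Point { x: number; y: number; }

// The circle to paint on the overlay canvas, in canvas coordinates.
interface NoseCircle { cx: number; cy: number; radius: number; }

// Only the canvas calls this sketch needs; a real CanvasRenderingContext2D fits.
interface OverlayContext {
  fillStyle: unknown;
  clearRect(x: number, y: number, w: number, h: number): void;
  beginPath(): void;
  arc(x: number, y: number, r: number, start: number, end: number): void;
  fill(): void;
}

// ASSUMPTION: within the nine nose points, indices 4..8 run along the base of
// the nose, so index 6 is the center of the base, just below the tip.
const NOSE_BASE_LEFT = 4;
const NOSE_BASE_CENTER = 6;
const NOSE_BASE_RIGHT = 8;

// Convert nose landmarks into a circle. scaleX/scaleY map video pixels to
// canvas pixels when the video is displayed at a different size.
function noseCircle(nosePoints: Point[], scaleX = 1, scaleY = 1): NoseCircle | null {
  const left = nosePoints[NOSE_BASE_LEFT];
  const anchor = nosePoints[NOSE_BASE_CENTER];
  const right = nosePoints[NOSE_BASE_RIGHT];
  if (!left || !anchor || !right) return null; // no usable detection this frame

  // Size the nose relative to the detected nose width so it scales with the face.
  const width = Math.hypot((right.x - left.x) * scaleX, (right.y - left.y) * scaleY);
  return { cx: anchor.x * scaleX, cy: anchor.y * scaleY, radius: width * 0.75 };
}

// Repaint the transparent overlay: clear the last frame, then draw the nose.
function drawNose(ctx: OverlayContext, canvasW: number, canvasH: number, c: NoseCircle | null): void {
  ctx.clearRect(0, 0, canvasW, canvasH);
  if (!c) return; // nothing detected: leave the overlay fully transparent
  ctx.beginPath();
  ctx.arc(c.cx, c.cy, c.radius, 0, 2 * Math.PI);
  ctx.fillStyle = "red";
  ctx.fill();
}
```

In a browser, `drawNose` would be called once per detection pass with the 2D context of a canvas positioned over the video element. Clearing the canvas on every pass is what keeps the nose following the face instead of leaving a trail.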

The trigger for this feature is based on the stream name. For our Easter egg, the software tests each stream name against a regex (regular expression). When a certain word is present in the name, it engages the face detection logic, grabs the nose landmarks, and draws the circle.
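The check itself can be very small. The sketch below shows one way to write it; the trigger word `clown` is a placeholder (this post does not reveal the real one), and the function names are hypothetical.

```typescript
// ASSUMPTION: "clown" is a stand-in trigger word, matched case-insensitively.
// The pattern deliberately has no "g" flag, because a global regex keeps state
// between test() calls and would give inconsistent results when reused.
const NOSE_TRIGGER = /clown/i;

// True when the stream name contains the trigger word.
function shouldAddNose(streamName: string, trigger: RegExp = NOSE_TRIGGER): boolean {
  return trigger.test(streamName);
}

// Pick out the streams that should get the overlay from a list of names.
function streamsWithNose(streamNames: string[]): string[] {
  return streamNames.filter((name) => shouldAddNose(name));
}
```

Gating the feature on the name means face detection only runs for the streams that need it, so every other attendee's video is left untouched.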

For our demo, we chose a browser-based API because it is widely accessible and easy to implement. It did introduce a few quirks, however, particularly related to video lighting. To work effectively, the attendee in question needs strong enough lighting on their broadcast for the library to discern their facial features. Using a server-side facial detection API could potentially mitigate these challenges. Server-side options are generally more robust: they take the computational load off the attendee’s browser and often have access to a wider range of vision libraries.

Although this might seem like a silly feature, it actually serves an important purpose. The red nose Easter egg is a great example of how Red5 Pro can work in conjunction with facial recognition software and overlays to add filters to a live video feed. Whether it is used for playful video filters, censorship overlays, or even on-screen statistics during a broadcast, this combination shows how versatile Red5 Pro is.

With such a flexible design, Red5 Pro can be adapted to make any idea possible. What will you create? If you’re curious how Red5 Pro can be customized to turn your vision into reality, contact us at info@red5.net or schedule a call.